By Jagsir Singh, Co-Founder, Noir Dove

A few days ago, I sat down with a healthcare strategy director at one of the global management consulting firms. He started as a practising doctor, moved through hospital administration, and has spent the last decade advising CEOs of large hospital chains, PE-backed healthcare deals, and digital health platforms across India, the Middle East, and Africa. His track record includes the commissioning of one of Mumbai’s largest tertiary care hospitals and turnaround work that took a hospital chain from flat growth to a 4x outcome for its private equity sponsor. He has sat on both sides of the table, the one ordering the angiogram and the one signing off on the cost-reduction plan, which is why his read on what is happening right now matters.

Two patient stories came up in that conversation. Neither will make headlines. Both should worry anyone betting on AI to fix healthcare.

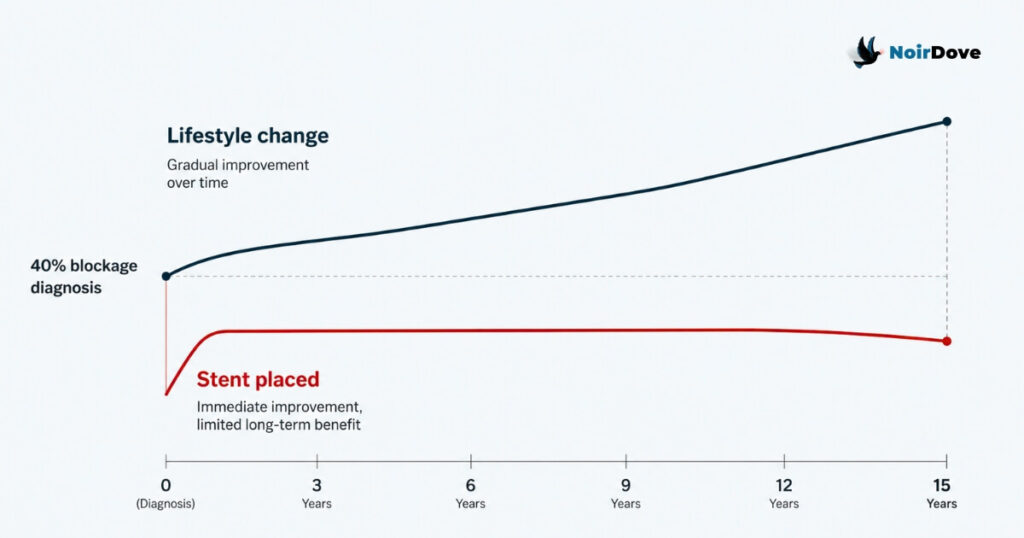

The 40% Blockage That Got a Stent

A 50-year-old man walked into a cardiac unit. His angiogram showed a 40% blockage. The surgeon placed a stent.

For decades, the clinical consensus on 40% blockage has been simple: manage it with lifestyle changes. Diet, exercise, weight, stress, sleep. Patients who follow this path have historically added 10 to 15 years to their lives. A stent at 40% does not extend life. It offers short-term comfort without changing the underlying disease, and unless the patient also changes how they live, the quality of life does not improve either.

So why was the stent placed? Because the hospital had targets. Because the cardiac unit had a revenue line. Because consultants were under pressure to keep the cath lab busy. The patient walked in trusting clinical judgment and walked out as a billing entry.

Now layer AI on top of this. A recent argument doing the rounds in healthcare circles says doctors should “take a second opinion from AI.” The logic sounds sensible until you ask what the AI is being trained on. If it is trained on the angiogram, the procedure code, and the discharge summary of that 50-year-old, it will quietly learn that 40% blockage warrants a stent. It will not learn that the decision was driven by quarterly numbers.

Multiply this by every cath lab in every chain hospital across India and the United States. The training data is not neutral. It carries the fingerprints of every cost and revenue pressure that shaped the original clinical call.

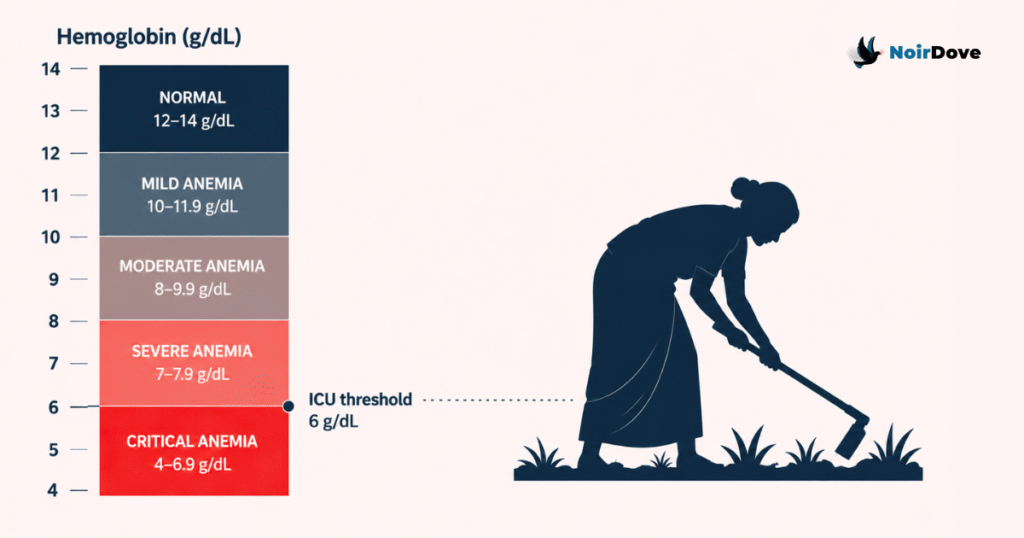

The Farmer With Hemoglobin of 6

The second case was a 50-year-old female farmer in a rural district. She came in with low energy and fever. Her diagnosis: anaemia. Her hemoglobin: 6 g/dL.

At 6 g/dL, the textbook says ICU admission. Blood transfusion. Cardiac monitoring. This is a patient whose heart is working overtime to push thin blood through the body. And yet, she had been working in the fields. Sowing, weeding, lifting. Functioning.

One of two things is true. Either the clinical parameter that says “6 g/dL means ICU” is wrong for a large population that has adapted over decades of nutritional deficit, or the parameter is right and the entire diagnostic framework is missing the contextual data that explains how she is still standing.

Either answer is uncomfortable. The first one challenges decades of pharmaceutical research, iron supplementation protocols, and the global anaemia industry. The second one says the data we are feeding into clinical systems is incomplete in ways nobody is auditing. Nobody challenges these parameters because too many supply chains, drug pipelines, and treatment protocols are built on top of them.

This is the kind of structural problem that never makes headlines. It just compounds quietly, year after year, patient after patient.

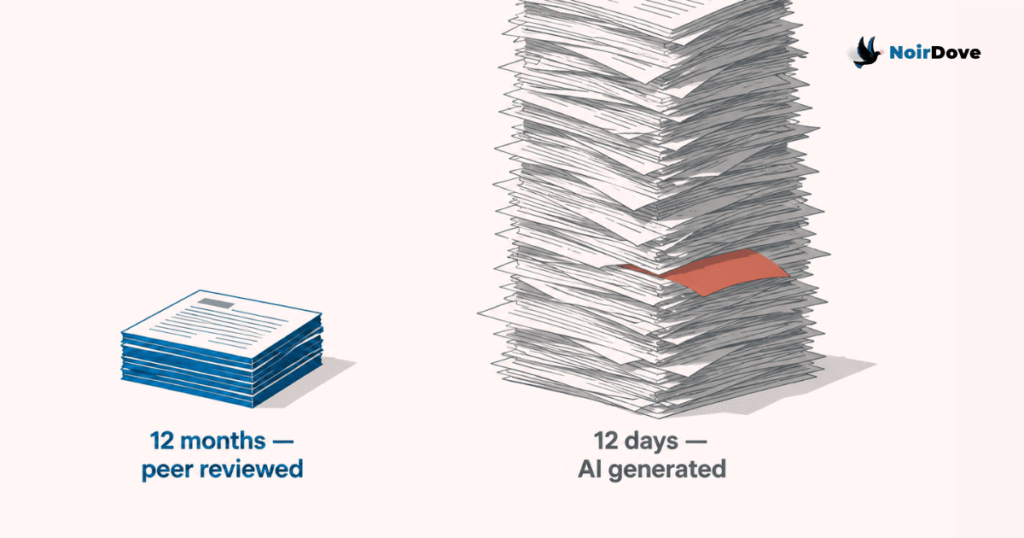

Peer Review Used to Be the Brake

There is a third layer that makes this worse. A medical paper used to take six months to a year to get published. Peer review was slow because it was supposed to be slow. Reviewers pushed back on weak methodology. They asked for context. They flagged confounders. The delay was the quality control.

AI has compressed that cycle. Papers can now be generated, summarised, and distributed in days. The bottleneck that protected the knowledge base has been removed in the name of speed. The stent example and the hemoglobin example are exactly the kind of nuance that a thoughtful reviewer would surface and a fast-publishing pipeline would miss.

Bad data plus fast publishing equals knowledge systems that drift in the wrong direction faster than anyone can correct them.

The CEO Mandate Nobody Talks About

Here is the part of the conversation that stayed with me longest.

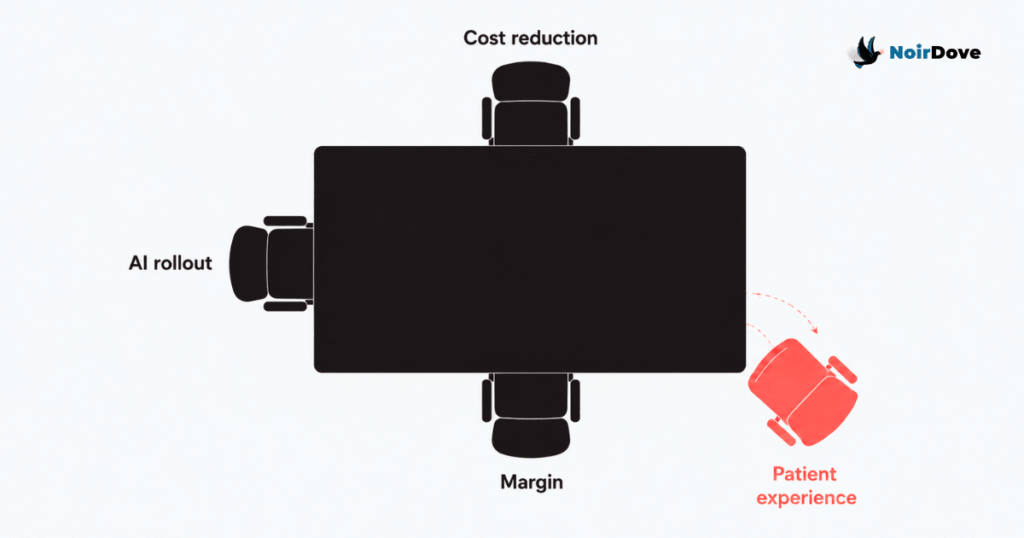

Almost every healthcare CEO he advises right now has been given one mandate by the board: reduce costs over the next two years. Not improve outcomes. Not raise patient satisfaction. Cut costs.

Tertiary and secondary care have historically competed on two things: clinical outcomes and patient experience. Outcomes are hard to differentiate at scale because most large hospitals get to roughly the same place on the standard procedures. So experience became the moat. The nurse who remembers your name. The discharge process that does not feel like a hostage release. The follow-up call that catches a complication before it becomes a readmission.

That moat is being filled in right now. Staffing ratios are tightening. Training budgets are being cut. Front-desk roles are being collapsed into kiosks. The patient experience in most large chains is visibly poorer than it was three years ago, and the people running these hospitals know it.

The justification offered, often quietly, is that AI will fill the gap. AI receptionists. AI triage. AI discharge summaries. AI follow-ups.

This breaks on two levels.

First, the original promise of AI in healthcare was to reduce cost so that human experience could improve. The savings were supposed to fund more time with patients, better-trained staff, faster access. What is actually happening is the inverse. Experience is being cut first, on the bet that AI will eventually compensate. It will not, at least not on the timeline the cost cuts are operating on.

Second, the strategic logic falls apart at scale. If every hospital has access to the same AI models, AI stops being a differentiator. It becomes table stakes. The only thing left to compete on is the quality of the human experience and the relationship with the patient. Which is precisely what is being cut to fund the AI rollout.

So the leaders cutting experience to fund AI are cutting the only competitive ground they have left.

This Is Not a Technology Problem

The framing matters. The stent decision was not an AI failure; it was a revenue model failure. The hemoglobin parameter is not an AI failure; it is a research priority failure. The collapse of patient experience is not an AI failure; it is a board-level capital allocation failure.

AI is being used as cover. It lets leadership talk about transformation while the underlying decisions are about margin. The technology gets the credit when something works and absorbs the blame when something does not, which is convenient for everyone except the patient.

A doctor placing an unnecessary stent, a parameter nobody dares to challenge, a peer-review process being bypassed, and a CEO cutting nursing ratios to fund a chatbot: these four things look unrelated. They are the same problem at four different layers, and AI is being trained on, accelerated by, and used to justify all of them at once.

The question every healthcare leader should sit with is not whether to adopt AI. It is whether the data they are feeding it, the assumptions they are not challenging, and the experience they are cutting to pay for it leave them with a hospital worth running in five years. Most boards have not asked this question yet. The few who have are not getting answers they like.

For founders building in healthcare and healthtech, the go-to-market read is this: the next 24 months will reward companies that position against this drift, not with it. If your product helps a hospital cut costs while quietly degrading patient experience, you are selling into the wrong half of the market and your churn will tell you so by month 18. The buyers worth winning are the CEOs, COOs, and chiefs of clinical operations who already know experience is the only moat left, and who need tools that defend it rather than hollow it out further.

Build for them. Position around outcomes and experience, not efficiency theatre. Lead with the contextual data layer that the current AI conversation is missing, the kind that would have flagged the stent and questioned the hemoglobin reading. The companies that bake clinical context, peer-review-grade validation, and experience metrics into their product story will close the deals the cost-cutting vendors lose in year two. Everyone else is selling a tool that helps hospitals dig the hole faster.